StewReads Local: Turn Your Favorite AI Conversations into Ebooks Directly on Your Device

After building the remote StewReads connector, I wanted to create something that worked entirely on-device. Something that didn't require an account or a cloud backend, but still offered the same magic of turning AI conversations into beautifully formatted ebooks.

Introducing StewReads Local

StewReads Local is a lightweight MCP (Model Context Protocol) server that runs entirely on your machine. It communicates over stdio, which makes it a natural companion for local AI clients like Claude Desktop. The code is available on GitHub.

Unlike the remote version, this server is designed with an offline-first philosophy. It's built in Python and leverages a bundled version of Pandoc to handle high-quality EPUB generation without needing any external APIs.

Why Run Local?

While the cloud version is great for convenience and OAuth2 integration, the local MCP server offers three distinct advantages:

- Absolute Privacy: Your conversations never leave your machine during the ebook generation process. The content is processed locally and turned into an EPUB file right where you are.

- Offline Reliability: You can generate ebooks even when your internet connection is spotty or non-existent.

- No Network Latency: Because everything happens on your hardware, there is no round trip to a remote server, so the "stewing" process is nearly instantaneous.

A Learning Power-Up for Students & Professionals

For students and young professionals navigating new AI-enabled workflows, StewReads Local is a perfect tool for building a personal knowledge base. Instead of letting deep research sessions or complex technical explanations disappear into a chat history, you can "stew" them into a permanent library. It allows you to learn more effectively at your own pace, away from the distractions of a browser.

Sample Prompt: "I'm studying the shift from subsistence to industrial farming. Can you summarize the key technological impacts and package it into an ebook for me?"

Sample Ebook: From Hoe to Harvester: Farming's Technological Turn

Seamless Cross-Device Access

Despite being a local-first tool, you can still have your library everywhere. Since you can configure your output_dir in the StewReads settings, you can point it to a folder synced by iCloud, Dropbox, or Google Drive. Your generated ebooks will automatically sync to all your devices, giving you a cloud-enabled library with zero cloud-side processing of your private data.

How It Works

The local server exposes a set of tools to Claude that allow it to take the current conversation context and package it into a structured ebook.
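To give a feel for what such a tool call looks like on the wire, here is a small sketch of the JSON-RPC 2.0 request an MCP client sends over stdio. The tools/call method and the message envelope follow the MCP specification; the tool name create_ebook and its arguments are hypothetical placeholders, not the actual StewReads tool schema.

```python
import json


def build_tool_call(request_id: int, tool_name: str, arguments: dict) -> str:
    """Serialize an MCP tools/call request as one newline-terminated JSON line."""
    request = {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }
    # The stdio transport sends each message as a single line of JSON;
    # json.dumps escapes any newlines inside string values.
    return json.dumps(request) + "\n"


if __name__ == "__main__":
    # Hypothetical tool name and arguments, for illustration only.
    line = build_tool_call(
        1,
        "create_ebook",
        {
            "title": "From Hoe to Harvester",
            "markdown": "# Chapter 1\n\nSubsistence farming...",
        },
    )
    print(line, end="")
```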

The pipeline is entirely local:

- MCP Client (Claude Desktop) calls the local stewreads-mcp executable.

- Server receives the conversation markdown and metadata.

- Bundled Pandoc converts the markdown to a high-quality EPUB3 file.

- Local Storage: The EPUB is saved to your configured downloads folder.

- (Optional) Dispatch: If configured, the server emails the file directly to your Kindle.

Note on Kindle Delivery: If you're sending ebooks to your Kindle, make sure to add your "From" email address to your Amazon Approved Personal Document E-mail List. Without this step, Amazon will reject the delivery for security reasons.
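For the curious, the optional dispatch step could look something like the following sketch, which uses only Python's standard library. The function names, the SMTP host and port, and the credentials are all assumptions for illustration; the post does not describe how StewReads actually sends mail.

```python
import smtplib
from email.message import EmailMessage
from pathlib import Path


def build_kindle_message(epub_path: Path, sender: str, kindle_address: str) -> EmailMessage:
    """Build an email with the EPUB attached, addressed to a Send-to-Kindle address."""
    msg = EmailMessage()
    # This sender address is the one that must be on Amazon's approved list.
    msg["From"] = sender
    msg["To"] = kindle_address
    msg["Subject"] = epub_path.stem
    msg.set_content("Ebook attached.")
    msg.add_attachment(
        epub_path.read_bytes(),
        maintype="application",
        subtype="epub+zip",
        filename=epub_path.name,
    )
    return msg


def send_message(msg: EmailMessage, host: str, port: int, username: str, password: str) -> None:
    """Send the message through an SMTP server using STARTTLS (settings are placeholders)."""
    with smtplib.SMTP(host, port) as smtp:
        smtp.starttls()
        smtp.login(username, password)
        smtp.send_message(msg)
```

Keeping message construction separate from sending makes the first half easy to check without touching the network.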

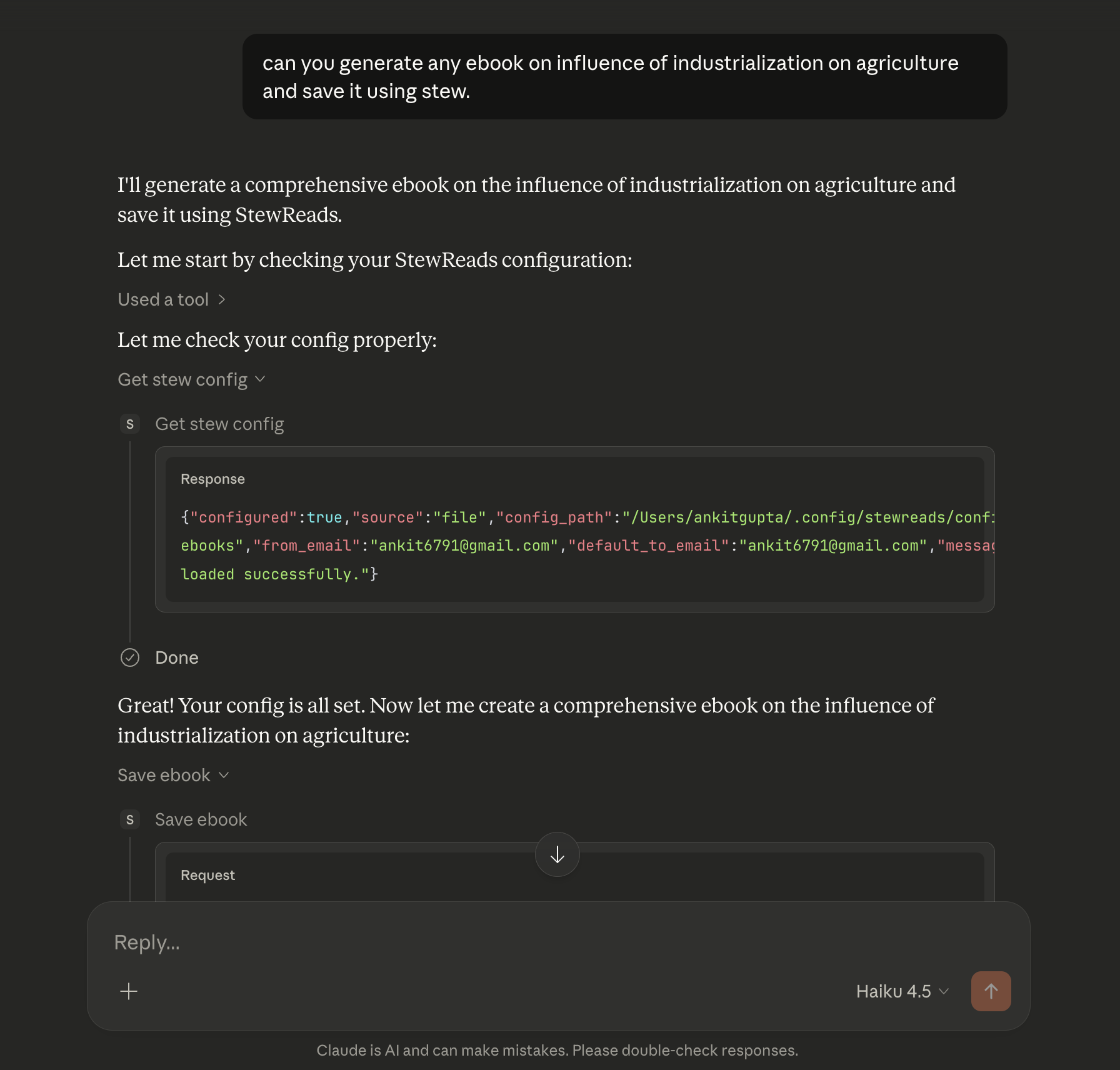

Here's what it looks like in action — asking Claude to stew a conversation into an ebook:

It's a simple, robust pipeline that proves you don't always need a complex backend to build powerful AI-assisted tools.
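To make that concrete, the conversion and save steps can be sketched in a few lines of Python. This is a minimal sketch under stated assumptions, not the StewReads source: it assumes a pandoc binary is reachable (StewReads bundles its own), and the slugify, build_pandoc_command, and stew helpers are names invented for this example.

```python
import re
import subprocess
from pathlib import Path


def slugify(title: str) -> str:
    """Turn a title into a filesystem-friendly file stem."""
    return re.sub(r"[^a-z0-9]+", "-", title.lower()).strip("-")


def build_pandoc_command(epub_path: Path, title: str, pandoc_bin: str = "pandoc") -> list[str]:
    """Build a pandoc invocation that reads markdown from stdin and writes EPUB3."""
    return [
        pandoc_bin,
        "--from", "markdown",
        "--to", "epub3",
        "--metadata", f"title={title}",
        "--output", str(epub_path),
    ]


def stew(markdown: str, title: str, output_dir: Path, pandoc_bin: str = "pandoc") -> Path:
    """Convert conversation markdown to an EPUB in output_dir and return its path."""
    output_dir.mkdir(parents=True, exist_ok=True)
    epub_path = output_dir / f"{slugify(title)}.epub"
    # With no input file given, pandoc reads the document from stdin.
    subprocess.run(
        build_pandoc_command(epub_path, title, pandoc_bin),
        input=markdown,
        text=True,
        encoding="utf-8",
        check=True,
    )
    return epub_path
```

A call such as stew(conversation_markdown, "From Hoe to Harvester", Path.home() / "Downloads") would then leave the finished EPUB in that folder.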

Setting It Up

Since it's an MCP server, you can add it to your claude_desktop_config.json in seconds. The recommended way is to install the stewreads-mcp package via uv:

    uv tool install stewreads-mcp

Then add it to your Claude Desktop configuration:

    {
      "mcpServers": {
        "stewreads": {
          "command": "stewreads-mcp",
          "env": {
            "STEWREADS_CONFIG_PATH": "/Users/you/.config/stewreads/config.toml"
          }
        }
      }
    }
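If you already have other MCP servers configured, you will want to merge this entry in rather than overwrite the file. Here is a small Python sketch of one way to do that; the helper names are made up for this example, and you would pass in the path to your own claude_desktop_config.json.

```python
import json
from pathlib import Path


def add_stewreads_server(config: dict, stewreads_config_path: str) -> dict:
    """Add (or replace) the stewreads entry while leaving other servers untouched."""
    servers = config.setdefault("mcpServers", {})
    servers["stewreads"] = {
        "command": "stewreads-mcp",
        "env": {"STEWREADS_CONFIG_PATH": stewreads_config_path},
    }
    return config


def update_config_file(config_file: Path, stewreads_config_path: str) -> None:
    """Load the existing JSON config if present, merge the entry, and write it back."""
    if config_file.exists():
        config = json.loads(config_file.read_text(encoding="utf-8"))
    else:
        config = {}
    add_stewreads_server(config, stewreads_config_path)
    config_file.write_text(json.dumps(config, indent=2) + "\n", encoding="utf-8")
```

Restart Claude Desktop after editing the file so it picks up the new server.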

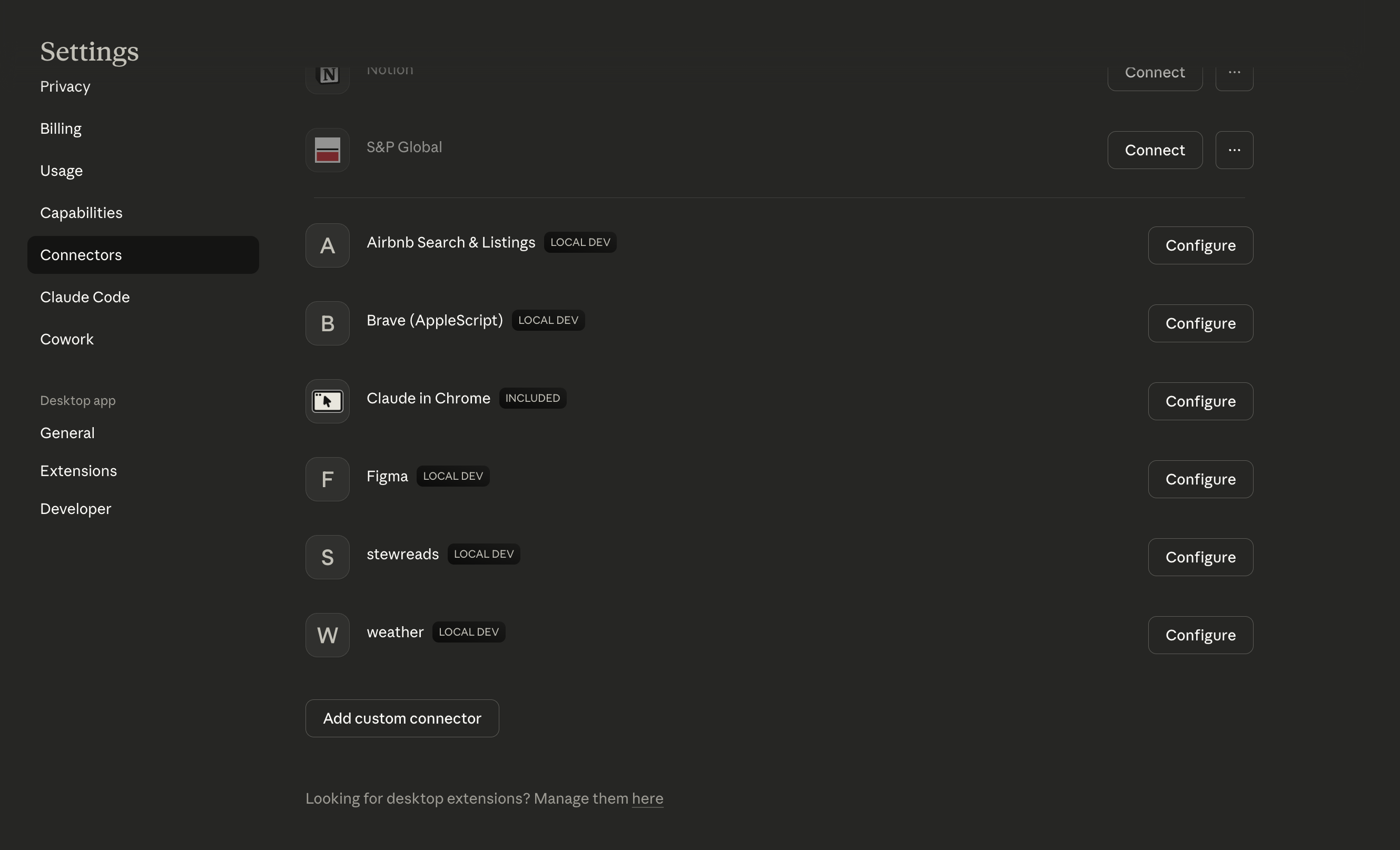

Once added, StewReads shows up in your Claude Desktop settings and its tools are immediately available in any conversation:

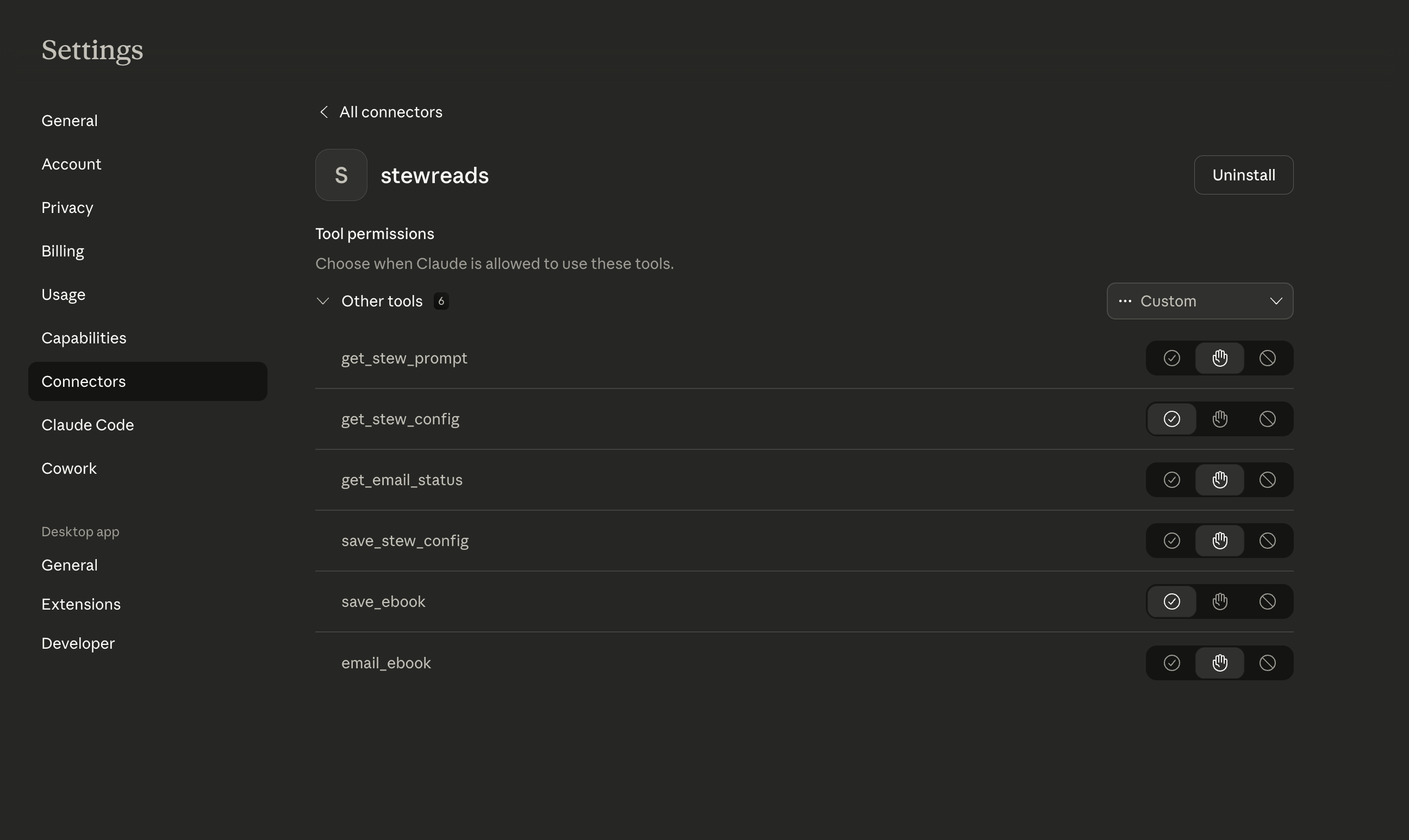

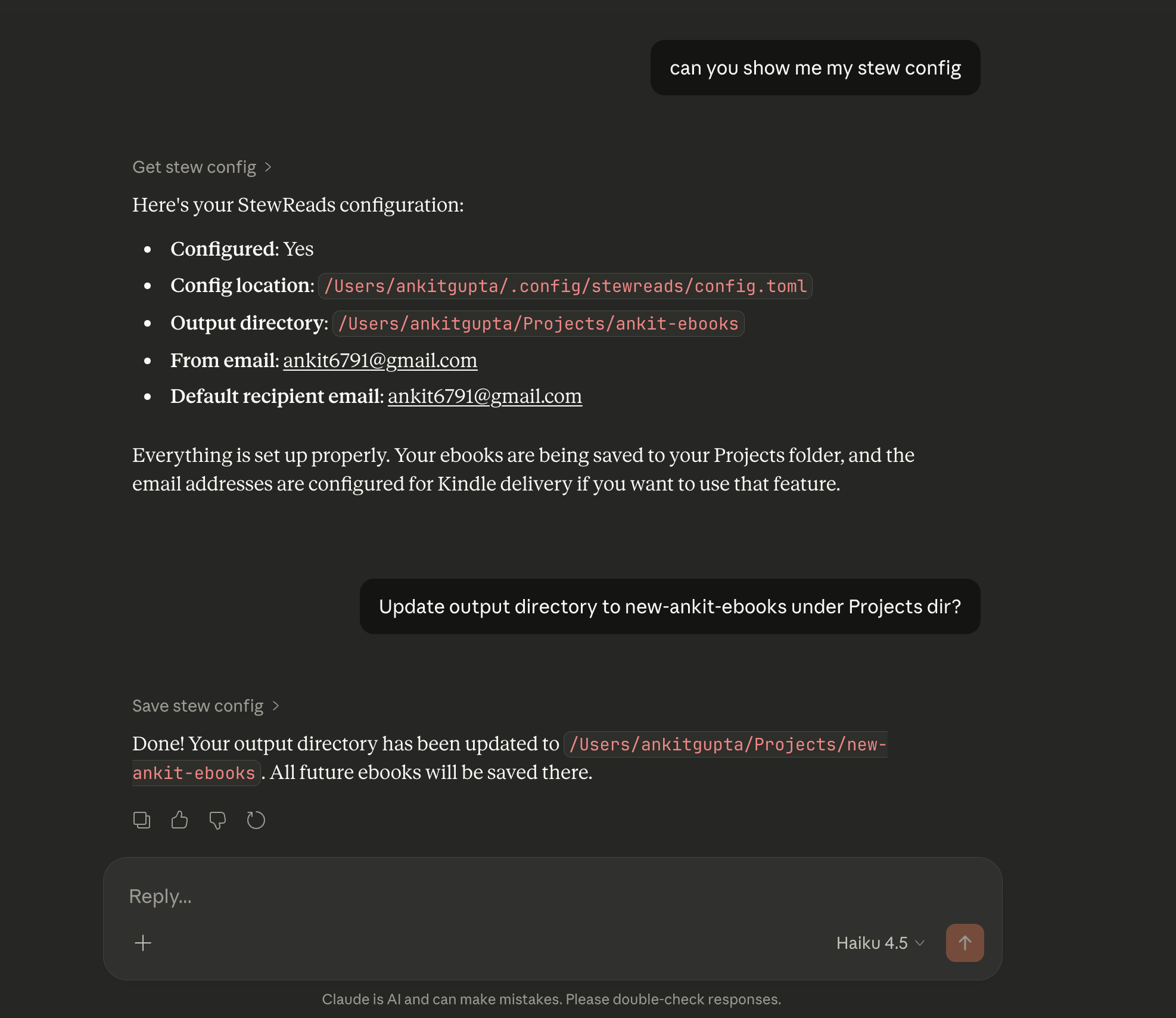

You can also view and update your StewReads config directly from the Claude interface, with no need to touch the TOML file manually.

The Future of Local MCP

Building this made me realize how much potential there is in local-first AI tools. We often think of AI as something that happens "out there" in the cloud, but the Model Context Protocol is bridging that gap, allowing us to bring those capabilities back to our local environments.

By combining the privacy of local execution with the portability of cloud storage, we create a powerful foundation for modern generative AI software. It’s an experience that feels personal, permanent, and deeply integrated into how we actually work and learn. StewReads Local is just the beginning of how I'm thinking about building these private, persistent AI artifacts.

Happy (Offline) Stewing!